Game theory is the study of mathematical models of strategic interaction among rational decision-makers. The ‘toy games’ that feature in this study are used in fields such as economics, the social sciences and the theory of conflict.

Game theory originated in the study of zero-sum games. In these games, the gains or losses of each participant are balanced by the gains or losses of the other participants. More recently, game theory has been used to describe and explain a much wider range of interaction behaviours. The term is now used to refer generally to the scientific study of logical decision making in humans, animals, and computers.

Toy games – matching pennies

The toy games which are of particular interest when looking at sustainability and environmental issues are:

- The Prisoner’s Dilemma

- Security Dilemma

- The Tragedy of the Commons

These games assume that all players act as rational decision-makers, that is, that each acts to maximise their own return.

In simple toy games of pure conflict, we can pitch one rational actor against another. ‘Matching Pennies’ is one such game.

Two players, ‘Even’ and ‘Odd’, play Matching Pennies. Each secretly turns a penny in their pocket to heads or tails, and they reveal them simultaneously. ‘Even’ wins if the pennies match; ‘Odd’ wins if they don’t. This is a zero-sum game in that one player’s loss exactly equals the other’s gain.

[Payoff table: Even’s strategy against Odd’s strategy]

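The idea of a ‘strategy’ in this case is somewhat redundant. Whichever face one player settles on, the other has a best response that beats it: if Even always played Heads, Odd would play Tails, which would make Even want to switch to Tails, and so on. Neither Odd nor Even therefore has a fixed choice of Heads (or Tails) that is a ‘best response’ to their opponent’s move, and the best either can do is to choose at random with equal probability. Another way of stating this is to say that there is no pure strategy Nash Equilibrium in this situation; the only equilibrium is a mixed one, in which each player plays Heads and Tails with probability 1/2.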
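To see why randomising is the natural choice, here is a minimal Python sketch. It assumes the usual payoffs of +1 for a win and -1 for a loss, and the function name `even_expected_payoff` is purely illustrative. It computes Even’s expected payoff for each pure choice when Odd plays Heads with probability q.

```python
from fractions import Fraction

# ASSUMED payoffs to Even: +1 if the pennies match, -1 if they don't.
# The game is zero-sum, so Odd's payoff is always the negative of Even's.
EVEN_PAYOFF = {
    ("H", "H"): 1, ("H", "T"): -1,
    ("T", "H"): -1, ("T", "T"): 1,
}


def even_expected_payoff(even_move, q_odd_heads):
    """Even's expected payoff for a pure move when Odd plays Heads with probability q."""
    return (q_odd_heads * EVEN_PAYOFF[(even_move, "H")]
            + (1 - q_odd_heads) * EVEN_PAYOFF[(even_move, "T")])


# If Odd leans towards Heads, Even's best response is Heads (and Odd would then switch)...
lean = Fraction(3, 4)
print(even_expected_payoff("H", lean), even_expected_payoff("T", lean))  # → 1/2 -1/2
# ...but against a 50/50 Odd, both of Even's choices are worth exactly 0.
half = Fraction(1, 2)
print(even_expected_payoff("H", half), even_expected_payoff("T", half))  # → 0 0
```

Any lean by one player hands the other a pure best response, which is why only the 50/50 mix is stable.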

Nash Equilibrium

A Nash Equilibrium occurs where no player has an incentive to deviate from their chosen strategy, given the strategies the other players have chosen. In other words, each player’s strategy is a ‘best response’ to the others’. The Nash Equilibrium is the result which emerges when both players play their ‘best response’ to each other.
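The definition translates directly into a check: a cell of the payoff table is a pure strategy Nash Equilibrium if neither player could do better by switching alone. The Python sketch below enumerates every cell of a two-player table. The function name `pure_nash_equilibria` and the dictionary layout are illustrative choices, not from any library, and the Matching Pennies payoffs of +1/-1 are assumed.

```python
from itertools import product


def pure_nash_equilibria(payoffs, row_moves, col_moves):
    """Return every (row, col) cell where neither player gains by deviating alone.

    payoffs maps (row_move, col_move) -> (row_payoff, col_payoff).
    """
    equilibria = []
    for r, c in product(row_moves, col_moves):
        row_pay, col_pay = payoffs[(r, c)]
        # Row player: no other row does better against column c.
        row_ok = all(payoffs[(r2, c)][0] <= row_pay for r2 in row_moves)
        # Column player: no other column does better against row r.
        col_ok = all(payoffs[(r, c2)][1] <= col_pay for c2 in col_moves)
        if row_ok and col_ok:
            equilibria.append((r, c))
    return equilibria


# Matching Pennies with ASSUMED +1/-1 payoffs, as (Even's payoff, Odd's payoff).
pennies = {
    ("H", "H"): (1, -1), ("H", "T"): (-1, 1),
    ("T", "H"): (-1, 1), ("T", "T"): (1, -1),
}
print(pure_nash_equilibria(pennies, ["H", "T"], ["H", "T"]))  # → []
```

In every cell of Matching Pennies the loser would rather switch, so the list comes back empty.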

Minimax and Maximin

Rational actors will seek to maximise their own minimum gain or minimise their maximum loss (or minimise the maximum gains of the other players). These are the Maximin and Minimax strategies put forward by von Neumann. The Nash Equilibrium occurs if these rational choices turn out to be best responses to each other. If a player’s best response is the same whatever the other players do, it is known as a ‘dominant strategy’.

Consider the ‘mixed outcome’ game below. It is a mixed outcome game because, unlike in a zero-sum game, where the losses of one player equal the gains of the other, the gains of one player can differ from the losses of the other.

N.B. In the payoff table below, the first number in each pair is Alice’s (row player) payoff and the second is Bob’s (column player).

Alice has three choices she can make (a, b, or c). Her Maximin value is the highest value she can be sure to get without knowing what Bob will play. In this payoff table that is 2, by playing ‘a’. If she plays either ‘b’ or ‘c’, she risks scoring a negative value.

Her Maximin strategy is therefore to play ‘a’.

Her Minimax value is the lowest value that Bob can hold her to, in the case that Bob does not know what she will do. In this payoff table her Minimax value is 4: if Bob plays ‘X’ she can get up to 5, and if he plays ‘Y’, up to 4.

Bob’s Maximin strategy is to play ‘X’, which guarantees him at least 0; playing ‘Y’ would put him at risk of getting -35. Bob’s Minimax value is 1: if Alice plays ‘a’, the most he can achieve is 1, if ‘b’, 2, and if ‘c’, also 1, so Alice can hold him to 1.

If both players play their Maximin strategies (Alice ‘a’, Bob ‘X’), the payoff will be (3,1).

Another way of explaining the Minimax value is to say that it is the largest value the player can be sure to get when they know the actions of the other players. For example, Alice would only play ‘b’ to achieve her Minimax value of 4 if she knew that Bob had played ‘Y’, because by playing ‘b’ without that knowledge she risks getting -60.

If instead each player, without knowing the other’s choice, aims to minimise the maximum payoff their opponent can get, Alice’s Minimax strategy would be to play either ‘a’ or ‘c’ (either one holds Bob to at most 1).

Bob’s Minimax strategy would be to play ‘Y’ (holding Alice to at most 4).

The payoff for (a, Y) is then (2,-35) and for (c, Y), (-15,1): a heavy loss for Bob in the first case and for Alice in the second.

So without prior knowledge, in this mixed outcome game, each player does best to play their Maximin strategy.
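The values above can be recomputed mechanically. In the Python sketch below, Alice’s payoffs and Bob’s payoffs at (a, X), (a, Y) and (c, Y) come from the text; Bob’s payoffs at (b, X), (b, Y) and (c, X) are not stated, so 2, 0 and 0 are assumed as values consistent with every figure quoted above. Variable and function names are illustrative.

```python
# Payoffs as (Alice, Bob). Bob's payoffs at (b, X), (b, Y) and (c, X) are not
# given in the text: 2, 0 and 0 are ASSUMED values consistent with it.
PAYOFFS = {
    ("a", "X"): (3, 1),   ("a", "Y"): (2, -35),
    ("b", "X"): (-60, 2), ("b", "Y"): (4, 0),
    ("c", "X"): (5, 0),   ("c", "Y"): (-15, 1),
}
ALICE_MOVES = ["a", "b", "c"]
BOB_MOVES = ["X", "Y"]


def alice(r, c):
    return PAYOFFS[(r, c)][0]


def bob(r, c):
    return PAYOFFS[(r, c)][1]


# Maximin value: the best of each move's worst case.
alice_maximin = max(min(alice(r, c) for c in BOB_MOVES) for r in ALICE_MOVES)
bob_maximin = max(min(bob(r, c) for r in ALICE_MOVES) for c in BOB_MOVES)
alice_maximin_move = max(ALICE_MOVES, key=lambda r: min(alice(r, c) for c in BOB_MOVES))
bob_maximin_move = max(BOB_MOVES, key=lambda c: min(bob(r, c) for r in ALICE_MOVES))

# Minimax value: the lowest ceiling the opponent can put on a player's payoff.
alice_minimax = min(max(alice(r, c) for r in ALICE_MOVES) for c in BOB_MOVES)
bob_minimax = min(max(bob(r, c) for c in BOB_MOVES) for r in ALICE_MOVES)
# Minimax strategies: the moves that hold the opponent down to that ceiling.
alice_minimax_moves = [r for r in ALICE_MOVES
                       if max(bob(r, c) for c in BOB_MOVES) == bob_minimax]
bob_minimax_moves = [c for c in BOB_MOVES
                     if max(alice(r, c) for r in ALICE_MOVES) == alice_minimax]

print(alice_maximin, alice_minimax, bob_maximin, bob_minimax)  # → 2 4 0 1
print(PAYOFFS[(alice_maximin_move, bob_maximin_move)])          # → (3, 1)
print(alice_minimax_moves, bob_minimax_moves)                   # → ['a', 'c'] ['Y']
```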

The Nash Equilibrium in a zero-sum game

In a zero-sum game, the Nash Equilibrium is the same as the Minimax solution.

In playing this game Alice might reason thus:

“With ‘b’, I could lose 2 points but can win only 1, and with ‘a’ I can lose 0 but can win 4, so ‘a’ looks a lot better.”

Bob would reason in a similar way:

“With ‘X’, I could lose up to 4 points, and with ‘Y’ I can lose 0 but can win 2, so ‘Y’ looks a lot better.”

The Nash Equilibrium is circled above. Alice has no incentive to change her decision, even if she knows what Bob has chosen. If Bob chooses ‘X’, it is still best for Alice to choose ‘a’ because 4 > 1. If he chooses ‘Y’, it is still best for her to choose ‘a’ because 0 > -2.

Bob has no incentive to change either. If Alice chooses ‘a’, it is best for Bob to choose ‘Y’ because 0 > -4, and if she chooses ‘b’, it is best for him to choose ‘Y’ because 2 > -1.

We can also see that the Nash Equilibrium is the same as the Minimax solution. Alice will play ‘a’ to restrict Bob to a maximum gain of 0, and Bob will play ‘Y’ to restrict Alice to a maximum gain of 0 as well.

This could also be described as the Nash Equilibrium (or minimax solution) being the ‘best of the worst-case scenarios’. Each player could have scored more in some other outcome, but their ‘best response’ minimises their losses rather than maximising their gains.

Any game of pure conflict can be modelled as a zero-sum game, in which the win of one party exactly equals the loss of the other, as we can see in the payoff matrix above.
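Taking Alice’s payoffs as implied by the players’ reasoning above (4 and 0 for ‘a’ against ‘X’ and ‘Y’, 1 and -2 for ‘b’, with Bob receiving the negative of each), the Python sketch below checks that Alice’s maximin and Bob’s minimax coincide and that they meet at a single saddle point. The variable names are illustrative.

```python
# Payoffs to Alice in the zero-sum game; Bob receives the negative of each value.
# Values inferred from the players' reasoning in the text.
ALICE = {("a", "X"): 4, ("a", "Y"): 0,
         ("b", "X"): 1, ("b", "Y"): -2}
ROWS, COLS = ["a", "b"], ["X", "Y"]

# Alice's maximin: the best payoff she can guarantee by choosing a row.
maximin = max(min(ALICE[(r, c)] for c in COLS) for r in ROWS)
# Bob's minimax: the smallest ceiling he can put on Alice's payoff by choosing a column.
minimax = min(max(ALICE[(r, c)] for r in ROWS) for c in COLS)

# A saddle point is the lowest value in its row (Bob cannot push Alice lower by
# switching column) and the highest in its column (Alice cannot do better by
# switching row): exactly a pure Nash Equilibrium of a zero-sum game.
saddle_points = [
    (r, c) for r in ROWS for c in COLS
    if ALICE[(r, c)] == min(ALICE[(r, c2)] for c2 in COLS)
    and ALICE[(r, c)] == max(ALICE[(r2, c)] for r2 in ROWS)
]

print(maximin, minimax, saddle_points)  # → 0 0 [('a', 'Y')]
```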

How do we decide on the payoff values?

When dealing with games that mix conflict and cooperation (games with mixed outcomes), we need a better way of describing player motivation. Economists developed the idea of utility, which allows us to express each outcome of a game as a numeric value. Imagine that it works as a kind of scale, like the scale on a thermometer, whose unit is the ‘util’.

This also answers a question which might have been on your mind until now: ‘Where do the values in the payoff matrices come from?’ (regardless of whether we are looking at zero-sum or mixed outcomes).

How do we assign a util value to a particular outcome? The number of utils that any outcome is worth equals the size of the risk someone is willing to take to attain it. How many utils will Alice assign to passing a particular exam?

- First decide your scale, e.g. 0 to 100 where 0 is assigned to the worst outcome Alice might encounter and 100 to the best.

- Next, offer Alice a series of (free) lottery tickets whose only possible prizes are the best or the worst outcome, and for each ticket ask her whether she would give up passing her exam in exchange for it.

- Each successive ticket has a better percentage chance of winning the best outcome than the one before.

- If the ticket which makes her say ‘yes’ has a 75% chance of her winning the best outcome, we say that passing the exam is worth 75 utils to her.
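This lottery-based procedure is often called the standard gamble. The Python sketch below walks through tickets in 5% steps and scores the first one accepted; the function `utility_by_standard_gamble` and the respondent `alice_accepts` (who accepts at 75%, matching the example) are hypothetical.

```python
def utility_by_standard_gamble(accepts, step=5, scale=100):
    """Offer tickets with rising win chances and score the first one accepted.

    accepts(p) should return True if the person would give up the sure outcome
    for a ticket paying the best outcome with probability p (else the worst).
    """
    for percent in range(0, 101, step):
        if accepts(percent / 100):
            return percent * scale / 100
    return scale  # never swapped, even at certainty: top of the scale


def alice_accepts(p):
    # HYPOTHETICAL respondent: Alice swaps once the chance reaches 75%.
    return p >= 0.75


print(utility_by_standard_gamble(alice_accepts))  # → 75.0
```

The step size limits precision: with 5% steps, the answer is only accurate to within 5 utils.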

Battle of the Sexes

To demonstrate, consider the following mixed-motive game, ‘Battle of the Sexes’. Alice and Bob are arguing over what to do on Saturday afternoon. Alice wants to go to a Star Trek convention; Bob wants to go to the ballet. They are still arguing, and have not made a decision, when they accidentally get separated. They now have to make their own way to either the ballet or the Star Trek convention, with no knowledge of what the other will do. For both of them, the best outcome is to do their preferred activity AND be in the other’s company, but even if they have to do their less preferred activity, they attach some utility to being with the other person. There is no utility attached to being alone, even if they are doing their preferred activity. Awwww, sweet, ain’t it?

There are two pure strategy Nash Equilibria here, which are circled: both go to the Star Trek convention, or both go to the ballet. The equilibrium Alice prefers is on the left and the one Bob prefers is on the right. There are two common justifications for why play should settle on a Nash Equilibrium in games with payoffs like these:

Rational explanation: Suppose a book existed with a list of all games and an authoritative recommendation on how each game should be played. Each recommended solution would have to be a Nash Equilibrium, or it would be rational for at least one player to deviate from the advice, and the book would no longer be authoritative.

Evolutionary: Processes that favour fitter strategies over less successful ones can only stop when a Nash Equilibrium is reached, because only then will every strategy be as fit as it can be, given the others.
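The evolutionary argument can be illustrated with simple best-response dynamics, used here as a stand-in for ‘processes that favour fitter strategies’ rather than a full evolutionary model: the players take turns switching to their best response until neither wants to move. The utilities (2 for one’s own favourite together, 1 for the other’s favourite together, 0 apart) are assumptions consistent with the story, and the helper names are hypothetical.

```python
# ASSUMED utilities: 2 = own favourite activity together, 1 = the other's
# favourite together, 0 = apart (whatever the activity). Payoffs are (Alice, Bob).
TREK, BALLET = "Star Trek", "Ballet"
MOVES = [TREK, BALLET]
PAYOFFS = {
    (TREK, TREK): (2, 1),
    (TREK, BALLET): (0, 0),
    (BALLET, TREK): (0, 0),
    (BALLET, BALLET): (1, 2),
}


def best_response(player, other_move):
    """Best move for player 0 (Alice) or 1 (Bob) given the other's move."""
    def payoff(move):
        cell = (move, other_move) if player == 0 else (other_move, move)
        return PAYOFFS[cell][player]
    return max(MOVES, key=payoff)


def best_response_dynamics(alice_move, bob_move, max_rounds=10):
    """Alice, then Bob, switch to a best response until nobody wants to move."""
    for _ in range(max_rounds):
        new_alice = best_response(0, bob_move)
        new_bob = best_response(1, new_alice)
        if (new_alice, new_bob) == (alice_move, bob_move):
            return alice_move, bob_move  # stable: a pure Nash Equilibrium
        alice_move, bob_move = new_alice, new_bob
    return None  # did not settle within max_rounds


print(best_response_dynamics(TREK, BALLET))  # each heads for their own favourite
print(best_response_dynamics(BALLET, TREK))  # each heads for the other's favourite
```

Both starting points are "apart" outcomes, yet the process only stops once the players are together, at one of the two equilibria.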

Let us now look at some ‘toy games’ with particular relevance to sustainability, beginning with probably the most famous ‘toy game’ of all: the Prisoner’s Dilemma.

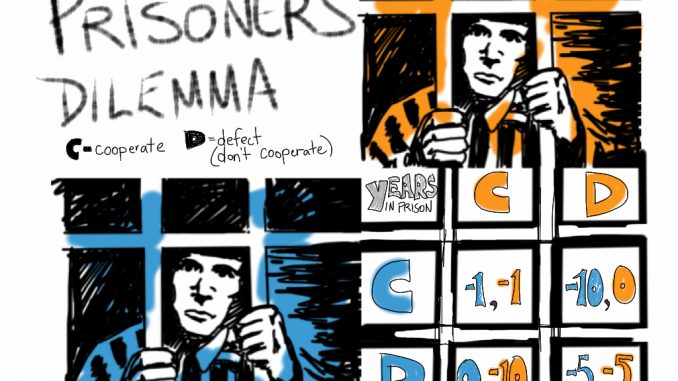

The Prisoner’s Dilemma

This game explores why rational actors may not cooperate with each other, even when it is in their best interest to do so.

Two alleged criminals – members of the same gang – are arrested and taken in for questioning. Each prisoner is in solitary confinement and cannot communicate with the other. The police do not have enough evidence to charge either with the more serious offence which they believe they have committed, but they have enough to convict both on a lesser charge. Simultaneously, the police offer each prisoner a bargain. Each prisoner is given the opportunity either to betray his gang-mate by testifying that the other committed the crime, or to cooperate with the other by remaining silent. The possible outcomes are:

- If A and B each betray the other, each of them serves two years in prison.

- If A betrays B but B remains silent, A will be set free and B will serve three years in prison (and vice versa).

- If A and B both remain silent, both of them will serve only one year in prison (on the lesser charge).

Although both players would clearly do better if both cooperated, the rational choice for each is to betray, because betraying shortens his own sentence whatever the other does: he goes free rather than serving one year if the other stays silent, and serves two years rather than three if the other betrays. As a result, the Nash Equilibrium (circled) is that each prisoner receives a sentence of two years, worse than the one year each would serve if they had cooperated.

The story of the Prisoner’s Dilemma is, of course, incidental – what makes the PD into the PD is the payoff table associated with it. The Nash Equilibrium will always emerge because the payoffs will make it happen. If you don’t end up at an NE then you aren’t playing the PD.

In the game, there are a number of assumptions which have to hold in order for it to play out as it does. The players are ‘rational actors’. Prisoners have no way of rewarding or punishing each other, other than the prison sentences. Furthermore, their future reputation is unaffected by the single decision they make. Under these assumptions, betraying a partner offers a greater reward than cooperating with them, and therefore rational actors will always ‘betray’.
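The dominance argument can be checked directly. The Python sketch below treats each year in prison as -1 util (an assumption about how sentences map to utility) and tests whether one of prisoner A’s moves beats every alternative against every move of B; the helper names are illustrative.

```python
# Years served, as (A's sentence, B's sentence); utility is minus the years.
SILENT, BETRAY = "silent", "betray"
MOVES = [SILENT, BETRAY]
YEARS = {
    (SILENT, SILENT): (1, 1),
    (SILENT, BETRAY): (3, 0),
    (BETRAY, SILENT): (0, 3),
    (BETRAY, BETRAY): (2, 2),
}


def utility_a(a_move, b_move):
    return -YEARS[(a_move, b_move)][0]


def strictly_dominant_move_for_a():
    """Return A's move that is strictly better against every move of B, if any."""
    for move in MOVES:
        others = [m for m in MOVES if m != move]
        if all(utility_a(move, b) > utility_a(alt, b) for b in MOVES for alt in others):
            return move
    return None


dominant = strictly_dominant_move_for_a()
print(dominant)  # → betray
# The game is symmetric, so B has the same dominant move, and mutual betrayal
# leaves each worse off than mutual silence.
print(YEARS[(dominant, dominant)], YEARS[(SILENT, SILENT)])  # → (2, 2) (1, 1)
```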

In reality, things are much less bleak. Humans do not always act as ‘rational actors’, and they often display a systematic bias towards co-operative behaviour.

Security Dilemma

A related game is the ‘Security Dilemma’, which has been used to demonstrate aspects of arms races and disarmament. In this case, the payoffs are based on two criteria, cost and security. There is a financial cost in maintaining a nuclear deterrent, but if you have a deterrent and your ‘enemy’ does not, your country is more secure.

The payoff table reflects this by showing that when both countries choose to be ‘Hawks’ (maintaining a deterrent), neither does as well as if both had decided to disarm (‘Doves’). Both of these decision pairs, (Dove, Dove) and (Hawk, Hawk), leave the countries equally ‘secure’, but (H, H) is the worse option because both countries are incurring major expense.

The worst outcome for each country is to opt to be a Dove while the other player opts to be a Hawk, so each country’s best response to a Hawk is to be a Hawk too. The Nash Equilibrium for this game is therefore the (H, H) decision with a (2, 2) payout. Yet countries have in practice disarmed unilaterally, so again, although these kinds of games are useful, they are too simplistic to represent reality in complex situations.

The Tragedy of the Commons

The Tragedy of the Commons is a game with high relevance to the challenges of environmental sustainability. It is also a game that plays out with great fidelity in the ‘real world’, in the way that humans choose to exploit (or conserve) ‘common goods’ such as land, freshwater or the oceans.

A commonly given example is a piece of common land on which a number of farmers are allowed to graze their sheep. The land is capable of supporting 20 sheep, and each of 10 farmers takes two of their sheep to the common daily to graze.

All is well. The common is able to support this level of use, and the grass grows back at an appropriate and sustainable rate.

However, if each farmer behaves as a rational actor, it is in his interests to overexploit the common. The utility he gains by bringing an extra sheep to the common far outweighs any negative effect on him: he alone profits from the value of the additional sheep, in wool or meat, so his gain is very near to +1, whereas the cost of the extra grazing is shared among all the farmers and his share of it is only a fraction of -1. That this is just a short-term gain is largely immaterial unless some kind of conservation intervention is made which prioritises the utility for the many over the utility for each individual.

Without such intervention the common will be overused and become less useful to everyone, or even be totally destroyed.
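To put rough numbers on this, the Python sketch below assumes a hypothetical linear model that is not from the text: every sheep above the common’s capacity of 20 reduces the value of every grazing sheep by 0.05 utils. One farmer adding a sheep gains 0.85 utils while the group as a whole loses a little; if all ten reason the same way, each ends up worse off than at the start.

```python
CAPACITY = 20   # sheep the common can support (from the example)
FARMERS = 10
PENALTY = 0.05  # ASSUMED: utils lost by every sheep for each sheep over capacity


def value_per_sheep(total_sheep):
    """HYPOTHETICAL value of one sheep, in utils, given how many graze the common."""
    overload = max(0, total_sheep - CAPACITY)
    return max(0.0, 1.0 - PENALTY * overload)


def farmer_utility(own_sheep, total_sheep):
    return own_sheep * value_per_sheep(total_sheep)


baseline = farmer_utility(2, 20)      # everyone grazes two sheep
one_defector = farmer_utility(3, 21)  # one farmer brings a third sheep...
others_after = farmer_utility(2, 21)  # ...and each of the other nine pays for it
all_defect = farmer_utility(3, 30)    # every farmer brings a third sheep

group_before = FARMERS * baseline
group_after = one_defector + (FARMERS - 1) * others_after

print(round(baseline, 2), round(one_defector, 2), round(others_after, 2))  # → 2.0 2.85 1.9
print(round(group_before, 2), round(group_after, 2))                       # → 20.0 19.95
print(round(all_defect, 2))                                                # → 1.5
```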

This can be modelled as a toy game with a payoff matrix, as above, but only in quite simple cases. For example, we can create a matrix in which only two farmers use the common, showing the relative utility to each depending on how many animals each brings. In effect this ‘plays’ the Tragedy of the Commons as if it were the Prisoner’s Dilemma. Such a matrix might look like this. This is a much smaller common than the one mentioned above, on which it is best to graze only two sheep in total.

It would be optimal (collectively) in this case for each farmer to graze only one sheep, but individually it is best for each to bring two, creating a Nash Equilibrium in which each ends up with less well-fed sheep.
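A two-farmer version can be sketched in Python. The per-sheep values below are assumptions chosen for illustration: each sheep is worth 1 util when two graze the small common, 0.6 when three do and 0.35 when four do. With these numbers, bringing two sheep is each farmer’s best response whatever the other does, yet (1, 1) gives the largest combined utility.

```python
from itertools import product

CHOICES = [1, 2]  # sheep each farmer may bring to the small common
# ASSUMED value (utils) of each sheep when this many graze in total:
# more sheep means less grass for each.
VALUE_PER_SHEEP = {2: 1.0, 3: 0.6, 4: 0.35}


def payoffs(a, b):
    """(Farmer A's utility, Farmer B's utility) when they bring a and b sheep."""
    v = VALUE_PER_SHEEP[a + b]
    return (a * v, b * v)


def is_nash(a, b):
    """True if neither farmer can do better by changing only their own choice."""
    ua, ub = payoffs(a, b)
    return (all(payoffs(a2, b)[0] <= ua for a2 in CHOICES)
            and all(payoffs(a, b2)[1] <= ub for b2 in CHOICES))


cells = list(product(CHOICES, CHOICES))
for a, b in cells:
    ua, ub = payoffs(a, b)
    print(f"A brings {a}, B brings {b}: ({ua:.2f}, {ub:.2f})")

equilibria = [cell for cell in cells if is_nash(*cell)]
best_total = max(cells, key=lambda cell: sum(payoffs(*cell)))
print("Nash Equilibrium:", equilibria)    # → Nash Equilibrium: [(2, 2)]
print("Collective optimum:", best_total)  # → Collective optimum: (1, 1)
```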

Modelling the Tragedy of the Commons as the Prisoner’s Dilemma is not without its problems, because the real problem cannot generally be described in terms of a dominant strategy: the best strategy for each user of a common depends on what the others do. If you remember from the above, a dominant strategy is dominant only because it is the best choice regardless of the decisions of other players.

However, more complex mathematical models of problems such as the Tragedy of the Commons are beyond the scope of this brief overview of toy games in game theory, and the Prisoner’s Dilemma approximation of the Tragedy of the Commons does provide a useful first look at the underlying principles of the problem.
